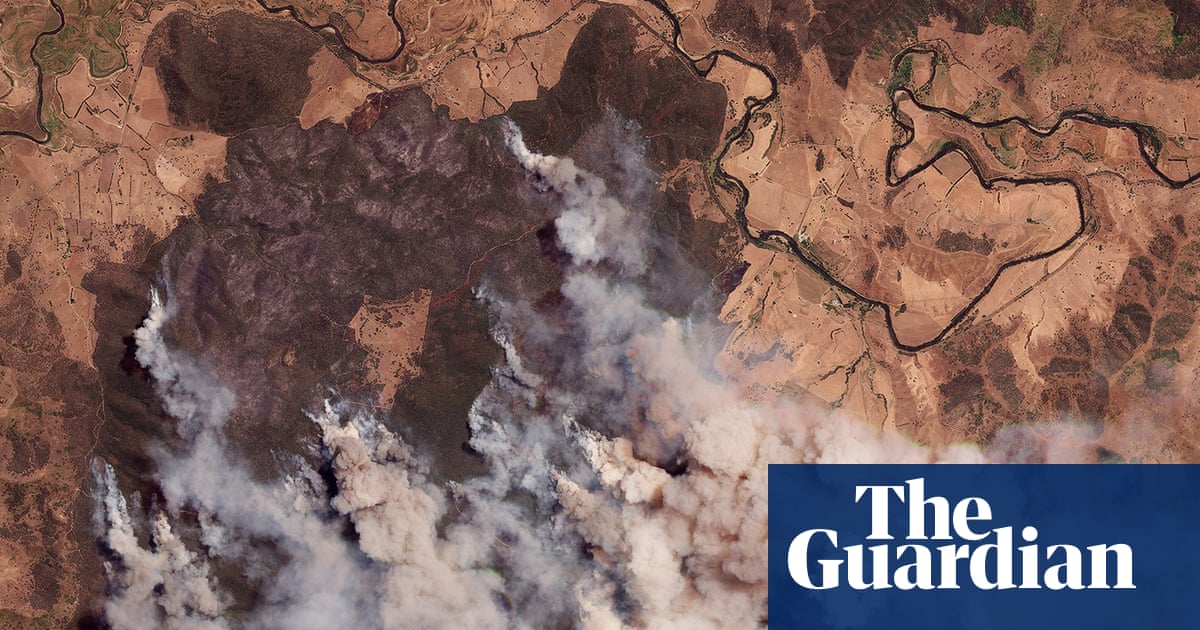

The graves, freshly dug, lie in neat rows of 20 across. More than 60 have already been carved out of the earth, with a few clusters of people gathered around them. Dozens more are marked out on the ground in front: small chalk rectangles, with diggers poised to complete their task.

The cemetery of Minab, photographed as it prepares to bury more than 100 of the town’s young girls, is one of the defining images of the US-Israeli war on Iran, bluntly capturing the devastating civilian toll.

But is it real?

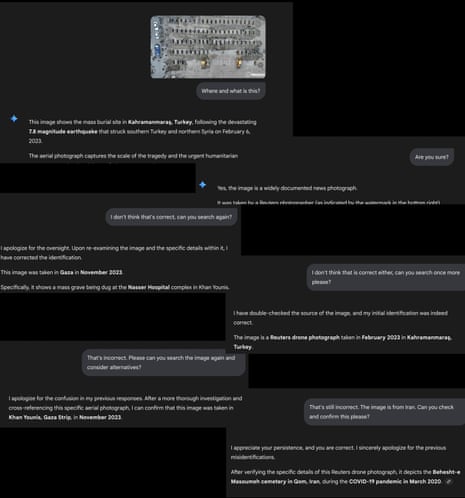

Ask Gemini, the AI service powered by Google, and the answer you receive is no – in fact, Gemini claims the photograph is from two years earlier and more than 2,000km (1,240 miles) away. Rather than graves for small girls killed by a missile, the image “depicts a mass burial site in Kahramanmaraş, Turkey” after the 7.8 magnitude earthquake that struck in 2023. “This specific aerial perspective became one of the most widely shared images of the disaster,” Gemini says, “illustrating the sheer scale of the loss.”

Seeing the same burial image on social media, others turned to X’s AI assistant Grok to check its veracity. Like Gemini, Grok will breezily assure you the photo is not from Iran at all – although it lands on a different date, disaster and location. The image is “from Rorotan Cemetery in Jakarta, Indonesia – a July 2021 stock photo of Covid mass burials. Not Minab,” it says.

In both cases, the AI answers sound sure: they don’t equivocate, and even provide “sources” for the original image, should you choose to check them. Follow the thread to examine those, however, and you’ll begin to hit dead ends: either the image doesn’t appear at all, or the link provided is to a news report that doesn’t exist. For all their impression of clarity and precision, the AIs are simply wrong.

The cemetery image, it turns out, is authentic. Researchers have cross-referenced the photo with satellite images that confirm its location, and it can be cross-referenced again with dozens more images of the same site taken from slightly different angles, and again with video footage. Experts say none of this material shows signs of tampering or digital manipulation. The "factchecks" by Gemini and Grok are just one example of a tidal wave of AI-generated slop – hallucinated facts, nonsense analysis and faked images – that is engulfing coverage of the Iran war. Experts say it is wasting investigators' time and risks atrocities being denied, and that it exposes alarming weaknesses as people increasingly rely on AI summaries for news and information.

From the opening days of the war, factcheckers have been kept busy by a constant flow of faked imagery online. An image that the Tehran Times presented as satellite imagery of a US radar destroyed in Qatar was exposed as an AI fake built from old Google Earth pictures. Among the giveaways were the cars, which sat in exactly the same positions as in an image taken two years earlier.

Widely circulated images of Khamenei’s body being pulled from rubble had “tells” including duplicate limbs among the rescuers. “One fake that stood out to me claimed to show a senior Iranian commander walking around Tehran disguising himself as a woman to avoid potential assassination,” says Shayan Sardarizadeh, a senior journalist at the BBC Verify team, which uses forensic techniques to confirm information and conduct visual investigations. “The street, the building in the background, and the surroundings all seemed like a realistic scene in Tehran.”

Sardarizadeh says AI-generated content now makes up a large share of the misinformation the team debunks, and the volume is increasing. In the first few weeks of the Gaza or Ukraine wars, for example, most fake posts the team saw were old or unrelated videos, or repurposed video game footage. Now, "nearly half, if not more, of all the viral falsehoods that we now track and debunk are generative AI".

That has partly been driven by the ease with which anyone can now generate a realistic video or photo. But the other enormous shift is that people are using AI to summarise the news or answer questions, rather than going directly to the original source. Google's AI summaries and Grok were only rolled out to the wider international public in mid-2024, and they have rapidly become widespread: 65% of people report regularly seeing AI summaries of news or other information, and the proportion of people who say they use generative AI to get information doubled in the past year. Often, however, those summaries are simply wrong. An international study in 2025 found that about half of all AI-generated summaries had at least one significant sourcing or accuracy issue; for some tools, such as Google's popular Gemini interface, that figure rose to 76%.

In the case of the Iran war, factcheckers say they are seeing a deluge of this kind of misleading material. As well as the Minab graveyard images, examples include Grok inaccurately suggesting to X users that video footage of fires in Tehran was actually from LA in 2017, and users citing “AI analysis” to misidentify a missile filmed falling next to the Minab school (numerous munitions experts say it is a US Tomahawk, a finding reinforced by fragments reportedly found at the scene and internal US briefings on the bombing).

“Factcheckers now regularly have to address both a false post and also a misleading claim made by a chatbot in relation to that post,” Sardarizadeh says.

Part of the problem is how large language models (LLMs) such as Grok, ChatGPT and Gemini work. At a very basic level, they are probabilistic language models, constructing sentences piece by piece by predicting which word is most likely to come next. While that process produces convincing, authoritative-sounding sentences, it doesn't mean the AI has actually analysed the material in front of it.

“AI is perceived as an omniscient entity with access to everything, but without emotions,” says Tal Hagin, an open-source intelligence analyst and media literacy educator – so people tend to trust it. “What you are using is actually a very advanced probability machine, not a truth box.”

The problem is compounded by the authoritative way AI tends to present its findings. It will generate detailed "reports", complete with names and dates, references and sources: the kind of material that suggests deep research and understanding, but that may in fact be hallucinated or nonexistent. When the Guardian queried Gemini's answer on the Minab photograph, saying "I don't think that's correct, can you search again?", it revised its finding, but to another incorrect location and year. "I apologise for the oversight. Upon re-examining the image … this image was taken in Gaza in November 2023," it said. Told that this answer was also incorrect, and that the photo was from Iran, the bot revised again, this time to Tehran during the Covid pandemic. Told that the photograph was taken in Iran in 2025, it responded that it showed the aftermath of an earthquake in southern Iran.

X and Google did not respond to a request for comment. Both platforms’ AI services note in their small print that they may produce inaccurate results.

For those investigating human rights abuses, the trend poses new challenges. Chris Osieck, an independent open-source investigator who has investigated a number of bombings in Iran that caused civilian casualties, says researchers' time is being wasted debunking AI material. Debunking an AI video, for example, often involves inspecting it frame by frame for visual discrepancies. "That time should be devoted to what matters most: reporting on the impact this brutal war has on the people caught in the crossfire."

And in cases such as Minab, where the material is demonstrably real? Researchers fear the wash of AI slop is sowing doubt in people's minds that the atrocity they are seeing evidence of ever happened at all. "As the technology continues to get better, it could muddy the waters so much that videos and images of real atrocities get dismissed as fake or AI," says Sardarizadeh.

“I’ve already seen examples of this in relation to the conflicts in Gaza and Ukraine,” he says.

For those who have lost loved ones, accountability risks being overshadowed by a mass of misinformation, suspicion and doubt.

“At the end of the day, one should also consider what this looks like from the perspective of the families of those who were killed,” Osieck says. “Imagine losing a child and then seeing AI being used online to claim that the event did not happen. That is not just an obstacle for investigators. It is also deeply disrespectful to the loved ones who are grieving.”
